This tutorial gives a brief introduction to Approximate Bayesian Inference via Markov Chain Monte Carlo.

Author: Bjarne C. Hiller

The Observation¶

Great news, everyone! We just made a new observation !

We know, that this observation was generated by a process that depends on another variable - let’s call it . Unfortunately, we cannot observe directly - it is hidden (latent). Can we infer some information about the latent variable from the observation we made?

Yes, using probability theory and Bayes’ law, we know that:

We are interested in the probability distribution , i.e., the probability of , posterior to observing . This problem is known as Bayesian inference.

Alright, the equation seems straightforward enough - so, where is the catch?

The Catch¶

Let’s say we just define the distribution from our assumptions prior to observing - a uniform distribution seems reasonable enough! Then, we describe the process which generates from , providing us implicitly with the likelihood : information, how likely is given .

But now it get’s tricky: How do we actually know the probability of observing evidence ? We could observe some more instantiations of , and estimate the density of . But depending on the density and structure of , it could require a ton of observations to guarantee that our approximation gets atleast somewhat close to the true density - especially, if there is a chance for very rare events.

Alternatively, we could just compute from the likelihood and the prior, which we already have - because we defined them ourselves. How convenient!

Using the definition of conditional probabilites, for a single discrete variable with possible values, Bayes’ Law then would transform into:

Great, so this is something we could just compute by iterating over z and summing up the individual values!

However, if we’re dealing with a latent continuous random variable , i.e., with values from a continuous domain, we need to put more effort in and integrate over instead. Additionally, we might be even looking at a vector of latent variables latent variables , which results in ugly, nested integrals...

Then, the computation becomes intractible. Or, simply put: it takes very, very long to compute.

The Solution¶

Since we are very, very impatient, we don’t want to wait for our inferred posterior probability . Maybe, we don’t actually need the result to be too accurate - then, we can sacrifice precision against speed!

This is known as Approximate Bayesian Inferrence, and historically, there are two main approaches to it:

1. Variational Inference (cool: VI)¶

Variational Inference methods usually restricts the posterior to be a distribution from a family of probability distributions - for example, a Normal distribution. This reduces the problem from computing the density on the complete domain to just estimating the parameters (like mean and variance) of the posterior distribution. Usually, the parameters are optimized by maximizing an objective function, like the Evidence Lower Bound (cool: ELBO), e.g., via gradient descent. Since those methods perform inference by finding the maximum of an objective function, they are called variational (see calculus of variations). You might have also already heard of Variational Autoencoders? Their latent representations describe parameters of a posterior distribution, so the encoder actually learns to perform Variational Inference.

2. Markov Chain Monte Carlo (cool: MCMC)¶

A Monte Carlo algorithm usually finds an approximation of the result by sampling from a random process. The name Monte Carlo was introduced by Stanisław Ulam, referencing the Monte Carlo Casino in Monaco and the gambling nature of the algorithm. Similarly, Markov Chain Monte Carlo algorithms approximate a distribution by repeatly taking random steps in a Markov Chain. The approximation of the density usually becomes more accurate with the number of steps, i.e., the length of the chain. Therefore, MCMC allows sampling from probability distributions, even if the density function of the distribution itself isn’t known.

MCMC methods can be used for Approximate Bayesian Inference by sampling from the posterior distribution.

In the remainder of this tutorial, we will implement a simple version of the Metropolis-Hastings Algorithm, which allows us to sample from the posterior distribution.

Implementation¶

Setting¶

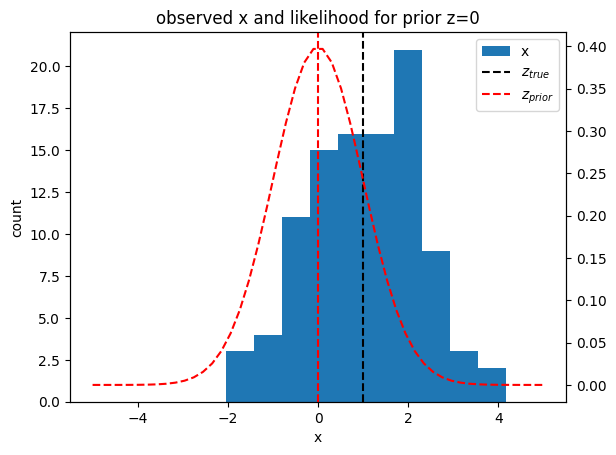

In our scenario, we observed values for a random variable . We know, that x is generated by a normal distribution with a scale of 1, and an unknown mean, which is our latent variable .

Our prior assumption is, that itself is normal distributed .

Let’s generate some new observation data from the true data distribution :

Notebook Cell

import numpy as np

import pandas as pd

import scipy.stats as stats

import matplotlib.pyplot as plt

from matplotlib.animation import FuncAnimation

from IPython.display import HTML

from tqdm import trange

rng = np.random.default_rng(19)

N = 100

# true data distribution

Z_TRUE_MU = 1

Z_TRUE_SIGMA = 1

# for normal prior

Z_PRIOR_MU = 0

Z_PRIOR_SIGMA = 1

# for uniform prior

Z_PRIOR_LOW = -1.5

Z_PRIOR_HIGH = 1.5

def get_data(n):

# sample z from true distribution

z = rng.normal(Z_TRUE_MU, Z_TRUE_SIGMA, n)

return rng.normal(z, 1)

x = get_data(N)

plt.hist(x, label="x")

plt.axvline(Z_TRUE_MU, color="k", linestyle="--", label="$z_{true}$")

plt.axvline(0, color="r", linestyle="--", label="$z_{prior}$")

plt.legend()

plt.title("observed x and likelihood for prior z=0");

plt.xlabel("x")

plt.ylabel("count")

plt.twinx()

x_pdf = np.linspace(-5, 5)

y_pdf = stats.norm(Z_PRIOR_MU, 1).pdf(x_pdf)

plt.plot(x_pdf, y_pdf, linestyle="--", color="r");

The question now is: Given our observation data , can we update the distribution for the mean parameter to get the posterior ?

Metropolis-Hastings Algorithm¶

The Metropolis-Hastings algorithm uses MCMC to draw random samples from distributions where direct sampling is difficult - for example, a unknown, potentially high-dimensional posterior distribution. Given a previous sample, propose a new one based on a conditional proposal distribution. Then, decide with an acceptande distribution, if the new sample should be accepted or rejected. The resulting sequence of samples can be used to approximate the distribution. Therefore, the algorithm can be used for approximate Bayesian inference - or alternatively, to approximate integrals.

Fun Fact: There exists some controversy regarding the credit of the original Metropolis algorithm. According to Marshall Rosenbluth (one of the original authors), Edward Teller posed the original problem, Rosenbluth himself solved it, and his wife Arianna Rosenbluth implemented the algorithm - while Metropolis only provided computer time.

We will start by implementing the Metropolis-Hastings Algorithm. We only need 2 things:

A (conditional) proposal density , that generates new samples given the previous sample

An acceptentance ratio , that tells us if the new sample should be accepted or rejected

Let’s start with the proposal density: This one is usually easy, as a common choice is a Gaussian Distribution centered at the previous sample . This makes the sequence of random samples into a Gaussian Random Walk.

We will implement this as a function get_proposal, which gives us a new sample:

def get_proposal(z):

return rng.normal(z, 1)Now the apparently more complex part: The acceptance ratio .

The good news: All we need is a function , which is required to be only proportional to the true posterior density . “Great,” you might say, “how does this help us? We don’t know the true posterior, since this is actually what we want to get out of this!”

Let’s have another look at Bayes Law:

While it is true that we don’t know the true posterior - if we cut the intractible normalization term, we actually end up with our desired proportional function !

And here comes the neat part of the Metropolis-Hastings algorithm: We can directly compute the acceptance ratio from this proportional function like this:

This can be interpreted as: the more likely it is that the observed data was generated using the new compared to our current , the more likely it will be accepted.

Usually, the acceptance ratio is often clipped to a maximum value of 1, resulting in:

Let’s implement the prior and likelihood function and compute the likelihood of our data given :

def prior(z):

# normal prior

return stats.norm(Z_PRIOR_MU, Z_PRIOR_SIGMA).pdf(z)

def prior(z):

# uniform prior

# for some weird reason, scipy specifies uniform distributions with loc,loc+scale

# instead of default parameters [low, high]...

return stats.uniform(Z_PRIOR_LOW, Z_PRIOR_HIGH-Z_PRIOR_LOW).pdf(z)

def likelihood(x, z):

return np.prod(stats.norm(z, 1).pdf(x))

likelihood(x, 0)np.float64(2.910161102369844e-96)Wait - why is the likelihood 0? Well, we generated a lot of samples - since samples are independent of each other, we get the joint likelihood by multiplying the individual likelihoods together. Leaving us with the product of many small numbers in , which is approaching 0 faster than our computer’s floating point precision can keep up with...

Luckily for us, there is again another easy fix: Just convert everything into log space, and we will probably be fine:

def log_prior(z):

# normal prior

return stats.norm(Z_PRIOR_MU, Z_PRIOR_SIGMA).logpdf(z)

def log_prior(z):

# uniform prior

return stats.uniform(Z_PRIOR_LOW, Z_PRIOR_HIGH-Z_PRIOR_LOW).logpdf(z)def log_likelihood(x, z):

return np.sum(stats.norm(z, 1).logpdf(x))def get_log_acceptance(z_new, z_old, x):

p_new = log_likelihood(x, z_new) + log_prior(z_new)

p_old = log_likelihood(x, z_old) + log_prior(z_old)

return np.minimum(0, p_new - p_old)def get_next_sample(z, x):

z_new = get_proposal(z)

accepted = False

while not accepted:

z_new = get_proposal(z)

log_alpha = get_log_acceptance(z_new, z, x)

t = rng.random()

if np.log(t) <= log_alpha:

accepted = True

return z_newdef get_chain(z, x, n=100):

zs = [z]

for i in trange(n):

z = get_next_sample(z, x)

zs.append(z)

return zschain = get_chain(0, x, n=100) 9%|▉ | 9/100 [00:00<00:01, 83.56it/s]100%|██████████| 100/100 [00:01<00:00, 73.09it/s]

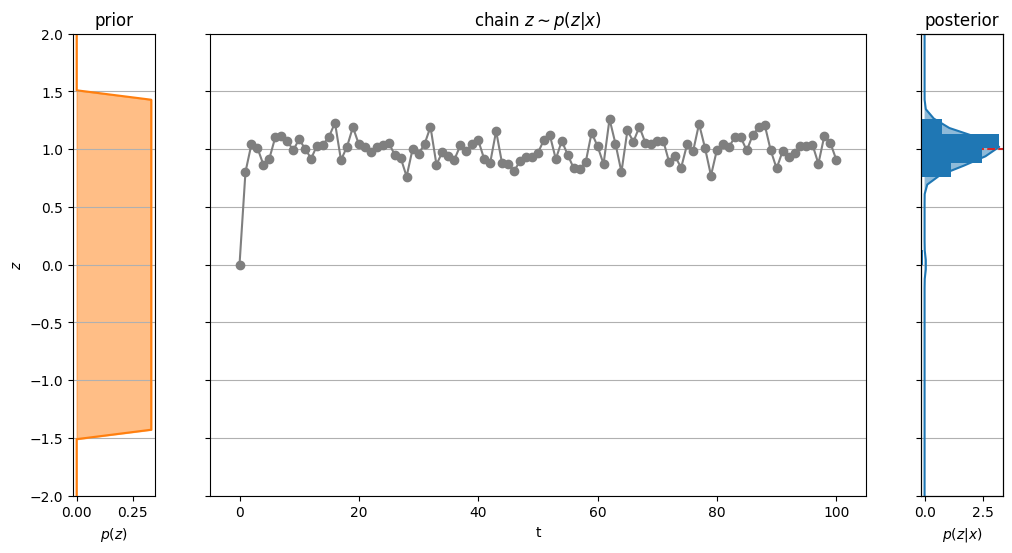

from scipy.stats import gaussian_kde

def plot_chain(chain, y_min=-2, y_max=2):

artists = {}

fig, axs = plt.subplots(1, 3, sharey=True, figsize=(12,6), width_ratios=[1,8,1])

# plot prior

y_prior = np.linspace(y_min, y_max)

x_prior = prior(y_prior)

axs[0].plot(x_prior, y_prior, color="tab:orange")

axs[0].fill_betweenx(y_prior, 0, x_prior, color="tab:orange", alpha=0.5)

axs[0].set_title("prior")

axs[0].set_xlabel("$p(z)$")

axs[0].set_ylabel("$z$")

axs[0].grid(True, axis="y")

# plot chain

artists["chain_line"] = axs[1].plot(chain, marker="o", color="tab:grey")[0]

axs[1].set_xlabel("t")

axs[1].set_title("chain $z \\sim p(z|x)$")

axs[1].grid(True, axis="y")

# plot posterior

axs[2].set_xlabel("$p(z|x)$")

x_kde = gaussian_kde(chain).pdf(y_prior)

artists["kde_line"] = axs[2].plot(x_kde, y_prior)[0]

artists["kde_fill"] = axs[2].fill_betweenx(y_prior, 0, x_kde, alpha=0.5)

axs[2].set_title("posterior")

axs[2].grid(True, axis="y")

axs[2].axhline(Z_TRUE_MU, linestyle="--", color="tab:red", label="true")

# plot histogram of posterior

ax_hist = axs[2].twiny()

artists["hist"] = ax_hist.hist(chain, orientation="horizontal")[2]

ax_hist.tick_params(top=False, labeltop=False)

plt.ylim(y_min, y_max)

return fig, axs, artistsfig, axs, artists = plot_chain(chain);

Our initial sample (i.e., the starting point of the chain) might be very unlikely under the true posterior. Therefore, the first samples in the chain are usually discarded, as it can take some time until the samples actually fit the posterior. This is called the burn-in period.

from matplotlib.path import Path

def get_animation(fig, axs, artists):

def update(i):

artists["chain_line"].set_data(np.arange(i), chain[:i])

patches = artists["hist"].patches

counts, bins = np.histogram(chain[:i], bins=len(patches))

heights = np.diff(bins)

# update kde

_, y_kde = artists["kde_line"].get_data()

x_0 = np.zeros_like(y_kde)

if i < 2:

x_kde = x_0

else:

x_kde = gaussian_kde(chain[:i]).pdf(y_kde)

artists["kde_line"].set_data(x_kde, y_kde)

# update kde fill

path = artists["kde_fill"].get_paths()[0]

v_x = np.hstack([x_kde[0], x_0, x_0[-1], x_kde[::-1], x_kde[0]])

v_y = np.hstack([y_kde[0], y_kde, y_kde[-1], y_kde[::-1], y_kde[0]])

vertices = np.vstack([v_x, v_y]).T

codes = np.array(

[Path.MOVETO] +

[Path.LINETO] * (2*len(x_kde)+1) +

[Path.CLOSEPOLY]

).astype("uint8")

path.vertices = vertices

path.codes = codes

# update histogram

for patch, count, y_kde, height in zip(patches, counts, bins[:-1], heights):

patch.set_y(y_kde)

patch.set_height(height)

patch.set_width(count)

return [artists["chain_line"], artists["kde_line"], artists["kde_fill"], *patches]

return FuncAnimation(

fig, update,

frames=len(chain),

blit=True,

repeat=False,

interval=1/100

)

anima = get_animation(fig, axs, artists)

HTML(anima.to_jshtml(fps=30))anima.save("figures/mcmc.gif")MovieWriter ffmpeg unavailable; using Pillow instead.

References¶

Links¶

Papers¶

Bibliography¶

@techreport{osti_4390578,

author = {Metropolis, Nicholas and Rosenbluth, Arianna W. and Rosenbluth, Marshall N. and Teller, Augusta H. and Teller, Edward},

title = {Equation of state calculations by fast computing machines},

institution = {Los Alamos Scientific Lab., Los Alamos, NM (United States); Univ. of Chicago, IL (United States)},

annote = {},

doi = {10.2172/4390578},

url = {https://www.osti.gov/biblio/4390578},

place = {United States},

year = {1953},

month = {03}

}

@misc{hoffman2013stochasticvariationalinference,

title={Stochastic Variational Inference},

author={Matt Hoffman and David M. Blei and Chong Wang and John Paisley},

year={2013},

eprint={1206.7051},

archivePrefix={arXiv},

primaryClass={stat.ML},

url={https://arxiv.org/abs/1206.7051},

}

@misc{kingma2022autoencodingvariationalbayes,

title={Auto-Encoding Variational Bayes},

author={Diederik P Kingma and Max Welling},

year={2022},

eprint={1312.6114},

archivePrefix={arXiv},

primaryClass={stat.ML},

url={https://arxiv.org/abs/1312.6114},

}- Hoffman, M., Blei, D. M., Wang, C., & Paisley, J. (2012). Stochastic Variational Inference. arXiv. 10.48550/ARXIV.1206.7051

- Kingma, D. P., & Welling, M. (2022). Auto-Encoding Variational Bayes. arXiv. 10.48550/arXiv.1312.6114

- Metropolis, N., Rosenbluth, A. W., Rosenbluth, M. N., Teller, A. H., & Teller, E. (1953). Equation of state calculations by fast computing machines. Office of Scientific. 10.2172/4390578